Know Thy Selfie: Identifying Myself in Pictures Using DeepFace

Where Am I!?

For dating apps, social media, and a few other situations, it can be really helpful to have all the photos of you separated from the other pictures on your phone. Do you really want to scroll past the 1800 pictures you took of pasta or your cat in the past 3 years to find the best visual representations of yourself? I explored facial verification capabilities and learned about their common shortcomings by writing a Python script to partition 4187 photos I took on vacation into pictures of me and pictures not of me. The Jupyter notebook can be read in full here. This project wasn’t necessarily about creating the optimal facial recognition pipeline, but about solving a problem I had through some code and light data science whilst learning a bit about facial verification along the way.

Detector Models and Vector Embeddings

I didn’t know much about facial recognition prior to this project. I vaguely understood that open source models have been built by think tanks, universities, or companies to numerically represent a face. I now understand that these models typically rely on neural network transformations to represent faces as vector embeddings, which can have up to 4096 dimensions. Can you think of how to describe your face in 4096 different numbers? Vector embeddings aren’t human-readable. This is a shortcoming of neural networks: they’re a bit of a black box. They can be used to solve very complex problems, but how they reach their output is obscured, so we are unable to trace the meaning of the numbers they spit out.

Prior to this, there’s something else happening in the back end. Another convolutional neural network scans over the image to detect possible faces. Through much training data, this model has learned what the general shape of a human face is. It scans through the image for what could be interpreted as a face. Based on model parameters, if it is confident enough in its identifications, it will crop to these faces, apply “alignment” transformations (rotate, skew, etc.) so that the face is right-side up and looking directly at the camera as much as it can and then pass that along to the vector embedding model.
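As a rough illustration of this detection step, the sketch below asks DeepFace for the faces it finds in one image and keeps only those the detector is reasonably confident about. It assumes a recent DeepFace release in which `DeepFace.extract_faces` returns a list of dictionaries with `face`, `facial_area`, and `confidence` keys. The helper names and the 0.9 cutoff are placeholders of mine, not values from the notebook.

```python
def detect_faces(image_path, detector_backend="opencv"):
    """Detect, crop, and align every face DeepFace can find in one image."""
    # Imported lazily so the filtering helper below works without DeepFace installed.
    from deepface import DeepFace

    return DeepFace.extract_faces(
        img_path=image_path,
        detector_backend=detector_backend,
        enforce_detection=False,  # do not raise when no face is found
        align=True,               # rotate the crop so the eyes are level
    )


def confident_faces(detections, min_confidence=0.9):
    """Keep only detections whose confidence meets the (assumed) cutoff."""
    return [d for d in detections if d.get("confidence", 0.0) >= min_confidence]
```

Something like `confident_faces(detect_faces("some_photo.jpg"))` (file name hypothetical) would then give the crops worth passing along to the embedding model.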

Here’s a video that I think does a great job explaining the technical process without going into the weeds too much:

There are a variety of Python packages that simplify this two-pronged process by incorporating many of these models into one unified package. The one I used for my project was DeepFace. I chose this package because it had an active and well-explained GitHub repo with many vignettes of use cases.

My Methodologies

Being a beginner in the space, I had a lot of theories on how I could leverage the tools in DeepFace to separate the photos of myself from my corpus. They all relied on calculating some distance metric between the observed faces in my corpus, but they differed in their preprocessing steps, if any. To improve results, I could apply grayscale, heighten contrast, create an aggregate vector embedding of my face, reduce dimensionality by way of principal component analysis, etc. With all the options at my feet, I figured it would be easiest to start with what I thought was the simplest solution and revise as needed.

Method 1 - Aggregated Vector Embedding of My Face

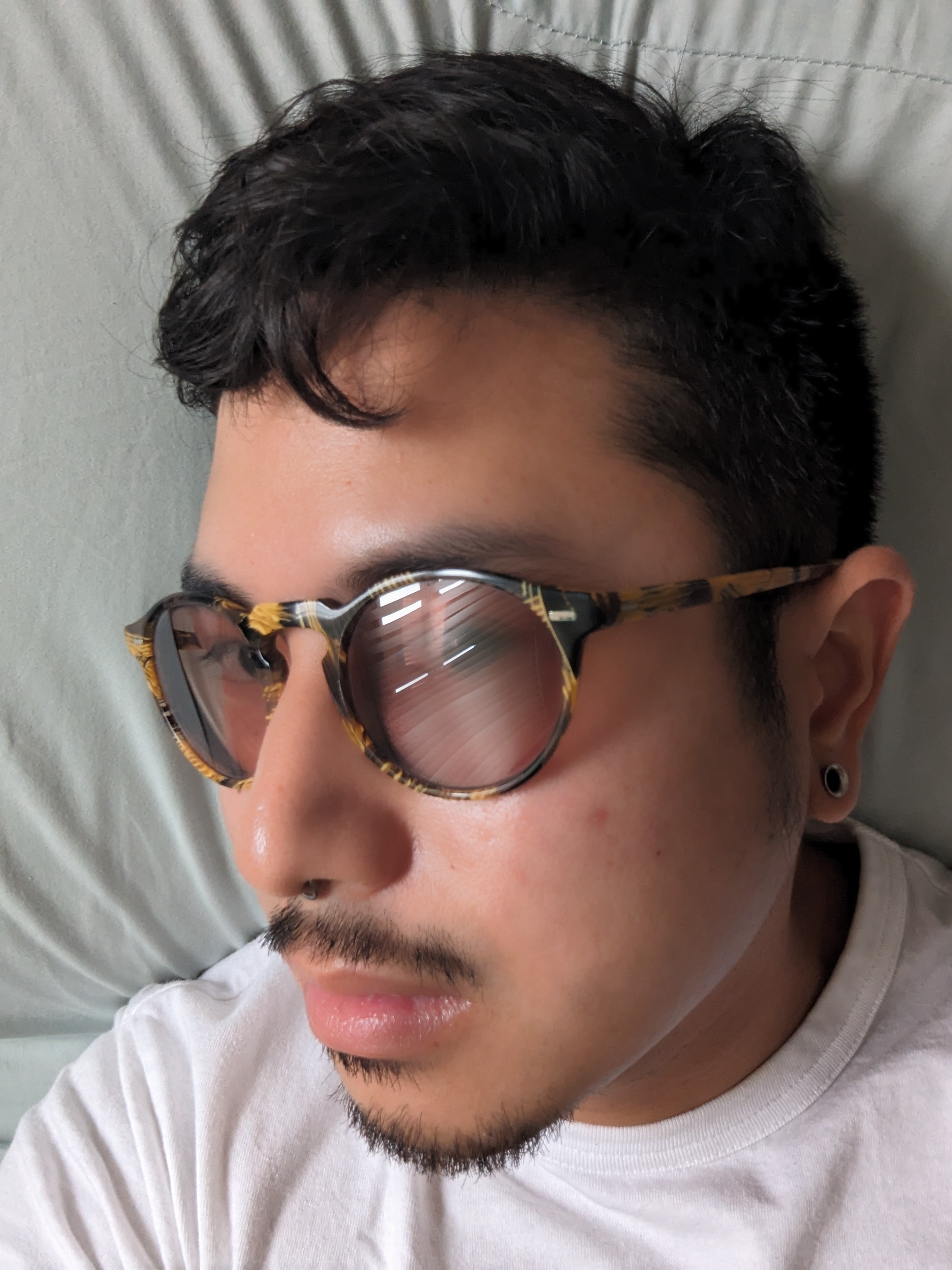

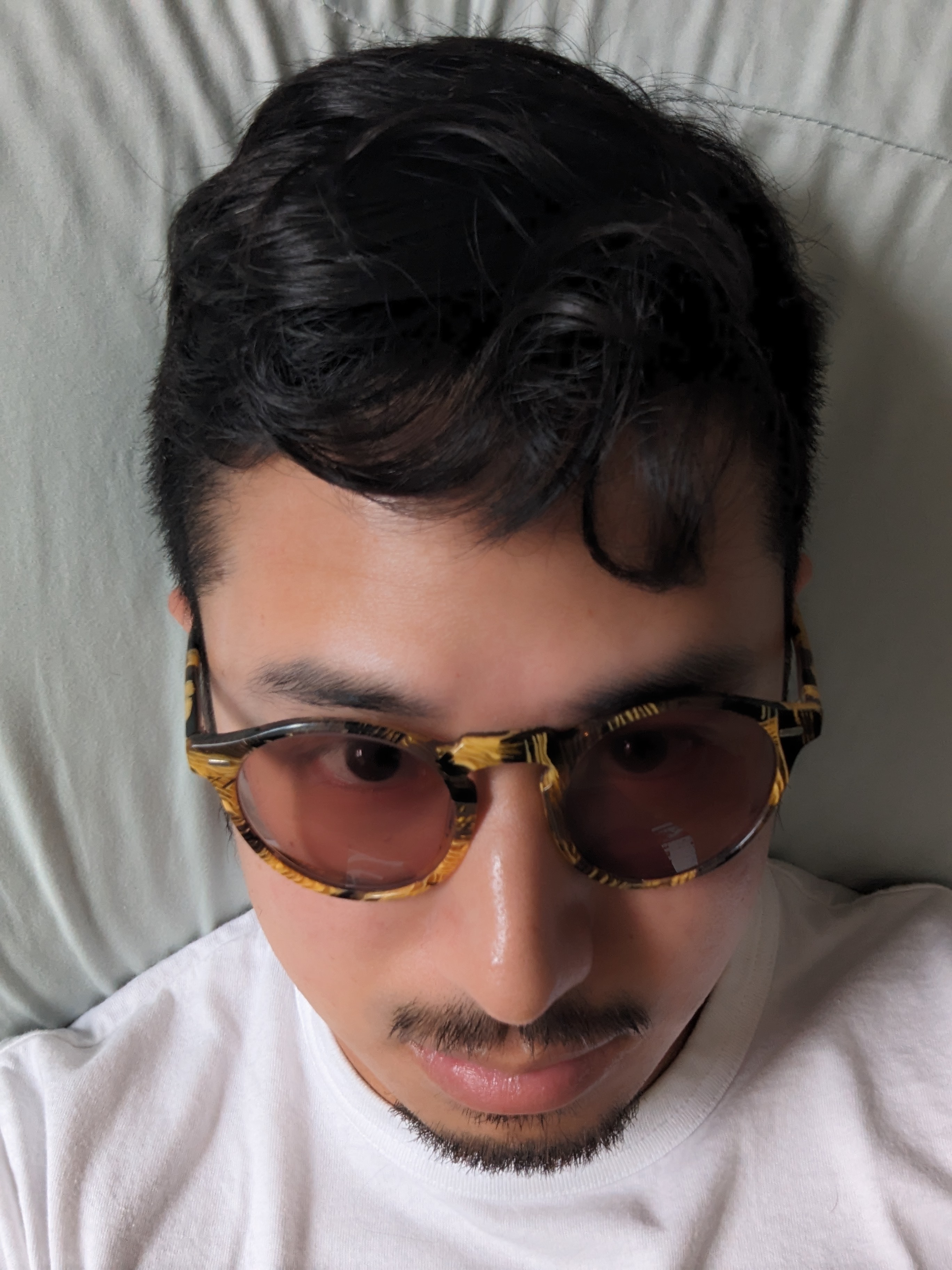

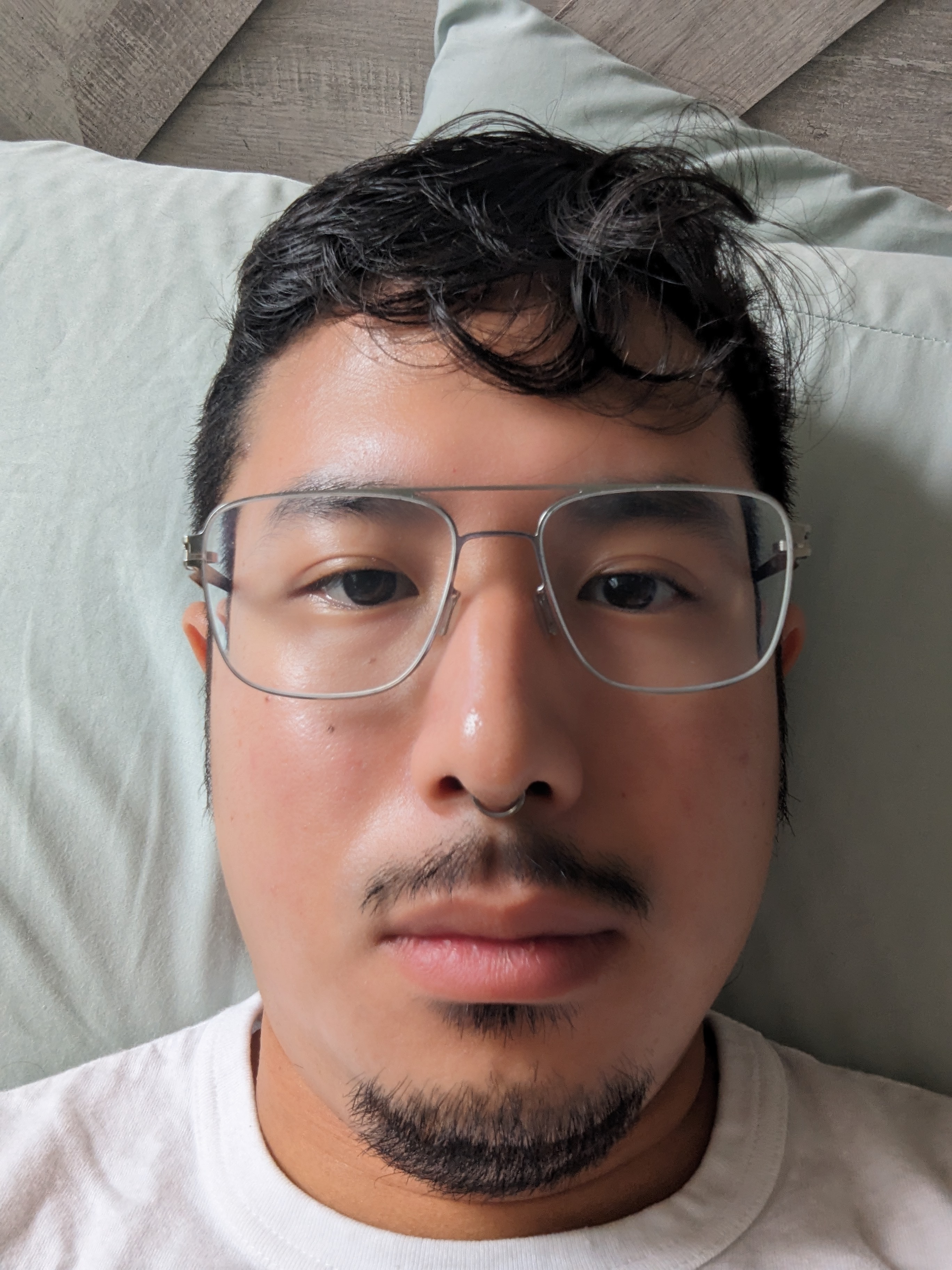

As previously mentioned, these models create a vector embedding per face. But one face doesn’t necessarily look the same in every context. I wear glasses, I’ve worn a lot of different glasses over the years, and sometimes (in the pool) I might even take my glasses off. Also, sometimes my face is not pointed straight at the camera, or sometimes I’m a bit in shadow (because I know photography and how nice people look when they’re short-lit). If I had a lot of these types of instances in my corpus, maybe there was some way to make the vector embedding I calculated the distance metric from more robust? This theory informed my first strategy. I took 20 photos of my face: 5 wearing sunglasses and looking straight, up, down, left, and right; another 5 doing the same with my new glasses; another 5 with my old glasses; and a final 5 without glasses. These were my “training” images. For these 20 faces, I used DeepFace to generate their 4096-dimensional vector embeddings. Then I created an average vector embedding across all 20. In theory, this creates some amalgamated representation of my face that I believed would be robust for a variety of comparisons.
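The averaging step can be sketched roughly as follows. This is a minimal illustration rather than the notebook's code: `embed_face` assumes a recent DeepFace release in which `DeepFace.represent` returns a list of dictionaries with an `embedding` key (older releases return a different shape), and the toy vectors stand in for real embeddings.

```python
from statistics import fmean


def embed_face(image_path, model_name="VGG-Face"):
    """Return one embedding (a list of floats) for the first face in an image."""
    from deepface import DeepFace  # lazy import: only needed for real photos

    faces = DeepFace.represent(img_path=image_path, model_name=model_name)
    return faces[0]["embedding"]


def average_embedding(embeddings):
    """Element-wise mean of equally long embedding vectors."""
    if not embeddings:
        raise ValueError("need at least one embedding")
    if len({len(e) for e in embeddings}) != 1:
        raise ValueError("all embeddings must have the same length")
    return [fmean(component) for component in zip(*embeddings)]


# Toy 3-dimensional vectors standing in for the real 4096-dimensional ones.
toy = [[1.0, 0.0, 2.0], [3.0, 2.0, 2.0]]
print(average_embedding(toy))  # [2.0, 1.0, 2.0]
```

With real photos, the input would be something like `[embed_face(p) for p in training_paths]`, where `training_paths` is a hypothetical list of the 20 training images.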

Then, for all 4187 photos in my test corpus, I generated vector embeddings from the faces in them. Every photo that yielded at least one vector embedding was moved to a subfolder in its directory called has_faces. All the other photos were (in theory) not pictures of people, so I wouldn’t need to keep including them in this project. This method generated some false positives / false negatives or otherwise interesting results.
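A minimal sketch of that sorting step, using only the standard library. The `has_face` argument is a hypothetical callable (for example, a wrapper that returns true when DeepFace produces at least one embedding for the file), and the `*.jpg` pattern is an assumption about the file names.

```python
import shutil
from pathlib import Path


def move_photos_with_faces(photo_dir, has_face, subfolder="has_faces"):
    """Move every JPEG for which has_face(path) is true into a subfolder."""
    photo_dir = Path(photo_dir)
    target = photo_dir / subfolder
    target.mkdir(exist_ok=True)
    moved = []
    # sorted() lists the files up front, before any of them are moved.
    for photo in sorted(photo_dir.glob("*.jpg")):
        if has_face(photo):
            shutil.move(str(photo), str(target / photo.name))
            moved.append(photo.name)
    return moved
```

Passing the detector in as a function keeps the file handling separate from the model, so the same helper can be reused with a different detector or embedding backend.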

The results were not as promising as I’d hoped given DeepFace’s false positives and negatives. Still, I calculated the Euclidean distance between my average embedding and every other embedding. In theory, the pictures with the least distance should be of me, and those with the most distance should not be of me, but I found both classes represented on both ends of the spectrum. After eyeballing the results, I chose a distance of 0.969 as a threshold and wound up with these results.

I won’t say they were equally represented. My method worked to some degree, as pictures of me were more common at lower values of Euclidean distance, but there were enough pictures that weren’t of me at the low end (and obvious pictures of me on the high end) that I didn’t find this method suitable.

For photos containing multiple faces, I determined a match by the face with the lowest distance. I tried this method again using cosine distance as my distance metric after reading that it could be more reliable in facial verification, but this did not prove useful either.
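The matching rule described above can be sketched like this: compute a distance from the reference embedding to every face in a photo, keep the smallest, and compare it to the threshold. The function names are mine, the embeddings are assumed to be non-zero lists of floats, and 0.969 is simply the threshold quoted earlier.

```python
import math


def euclidean_distance(a, b):
    """Straight-line distance between two equally long vectors."""
    return math.dist(a, b)


def cosine_distance(a, b):
    """One minus the cosine similarity (assumes neither vector is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def is_match(reference, face_embeddings, threshold=0.969, metric=euclidean_distance):
    """A photo matches when its closest face is within the threshold."""
    if not face_embeddings:
        return False, None
    best = min(metric(reference, face) for face in face_embeddings)
    return best <= threshold, best
```

Swapping in `metric=cosine_distance` changes the scale of the distances, so the threshold would need to be chosen again for that metric.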

Method 2 - Singular Vector Representation and Leveraging DeepFace

I started to think that maybe creating an aggregate representation of my face wasn’t ideal. Imagine compressing 20 different photos of you - looking different each time - into one. Would this give someone an accurate idea of what you look like, or ultimately just be a little confusing? Hard to say, and perhaps my analogy isn’t perfect. Vector embeddings aren’t like pictures; they’re measurements of features in a high-dimensional space. A vector average could be the right approach, given good pictures. But taking my vector average from so many different facial orientations and glasses didn’t seem to be doing me any favors.

So then, I decided to calculate distance metrics between one photo I thought best represented my face (looking straight ahead, no glasses) and all the others. Also, rather than calculating all the distance metrics manually, I leveraged DeepFace’s own methods. This did everything I had done before in fewer lines of code, and decided whether the faces were a match using DeepFace’s own distance threshold. One of the critical benefits was that I could save the decision, the distance, and the threshold to a dictionary, so I could later adjust the threshold and change the decision if I felt the model parameters were too lenient or too strict about what counted as a match.
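Roughly, that workflow might look like the sketch below. It assumes a recent DeepFace release in which `DeepFace.verify` returns a dictionary containing `verified`, `distance`, and `threshold` (older releases name the threshold key differently). The `redecide` helper and the layout of the stored results are illustrative, not the notebook's code.

```python
def verify_pair(reference_path, candidate_path):
    """Ask DeepFace whether two photos show the same person."""
    from deepface import DeepFace  # lazy import: only needed for real photos

    result = DeepFace.verify(
        img1_path=reference_path,
        img2_path=candidate_path,
        enforce_detection=False,  # do not raise when no face is found
    )
    # Keep only the fields needed to revisit the decision later.
    return {key: result[key] for key in ("verified", "distance", "threshold")}


def redecide(results, new_threshold):
    """Recompute the match decision for stored results under a new threshold."""
    return {
        name: {**r, "threshold": new_threshold, "verified": r["distance"] <= new_threshold}
        for name, r in results.items()
    }
```

Because the distances are stored, loosening or tightening the threshold later is a dictionary operation and does not require running the model again.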

This provided some pretty reliable results! This method identified 655 photos as me with only 1 false positive. That left 578 photos that weren’t tagged as me, of which 150 were false negatives (some of that is understandable - face obstructed, in shadows, etc.). I had to think of a way of grabbing the rest of the photos of me without grabbing all the photos that weren’t of me.
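To put those counts in more familiar terms, here is a quick back-of-the-envelope calculation of precision and recall. It assumes the counts can be read as a confusion matrix: 655 photos tagged as me including 1 false positive, and 578 untagged photos including 150 false negatives.

```python
tagged_as_me = 655
false_positives = 1
not_tagged = 578
false_negatives = 150

true_positives = tagged_as_me - false_positives  # 654
true_negatives = not_tagged - false_negatives    # 428

# Precision: of the photos tagged as me, how many really were me?
precision = true_positives / (true_positives + false_positives)
# Recall: of all the photos of me, how many did the method find?
recall = true_positives / (true_positives + false_negatives)

print(f"precision = {precision:.3f}")  # precision = 0.998
print(f"recall    = {recall:.3f}")     # recall    = 0.813
```

In other words, almost everything tagged as me really was me, but close to a fifth of the photos of me were still being missed.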

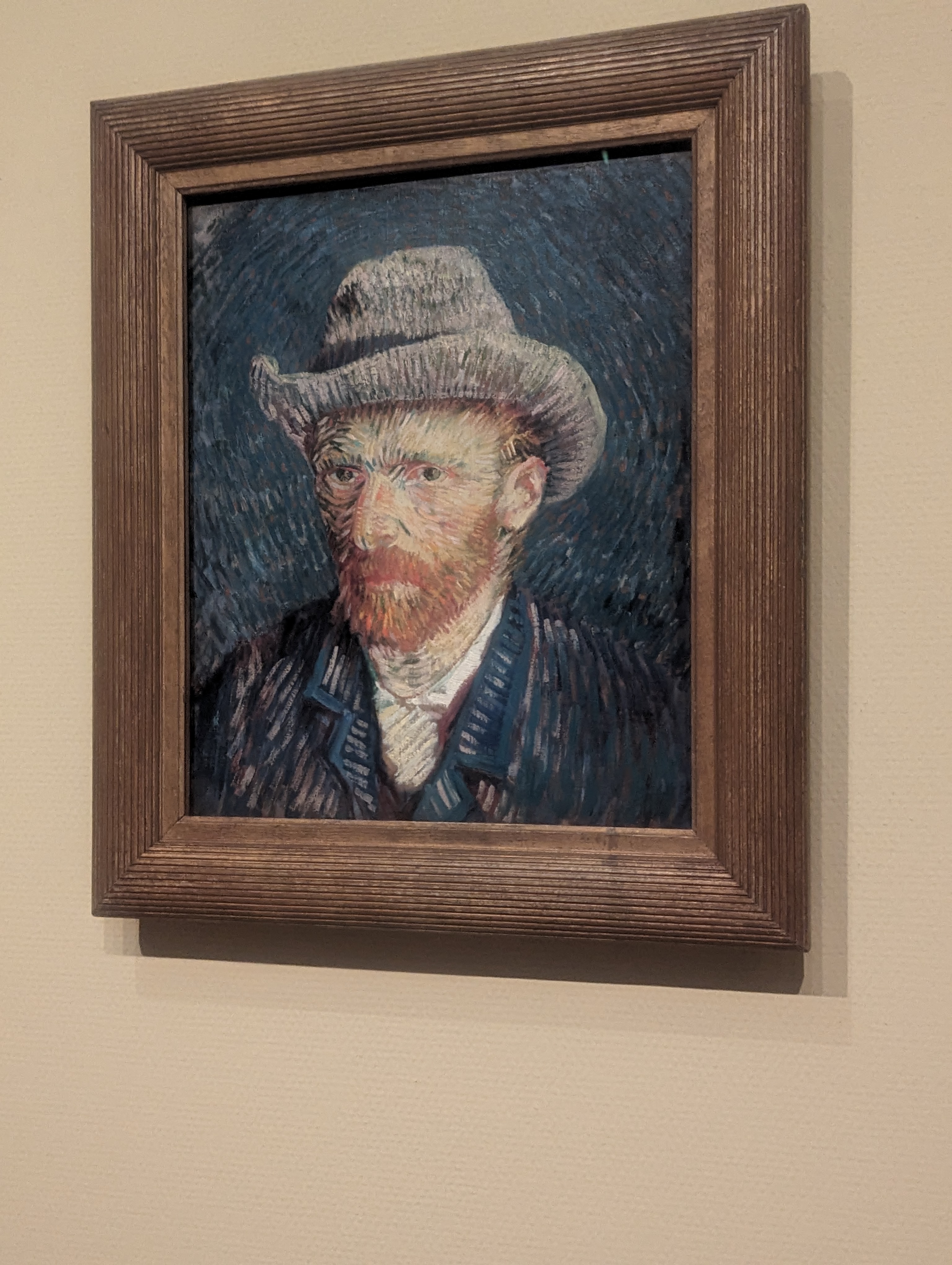

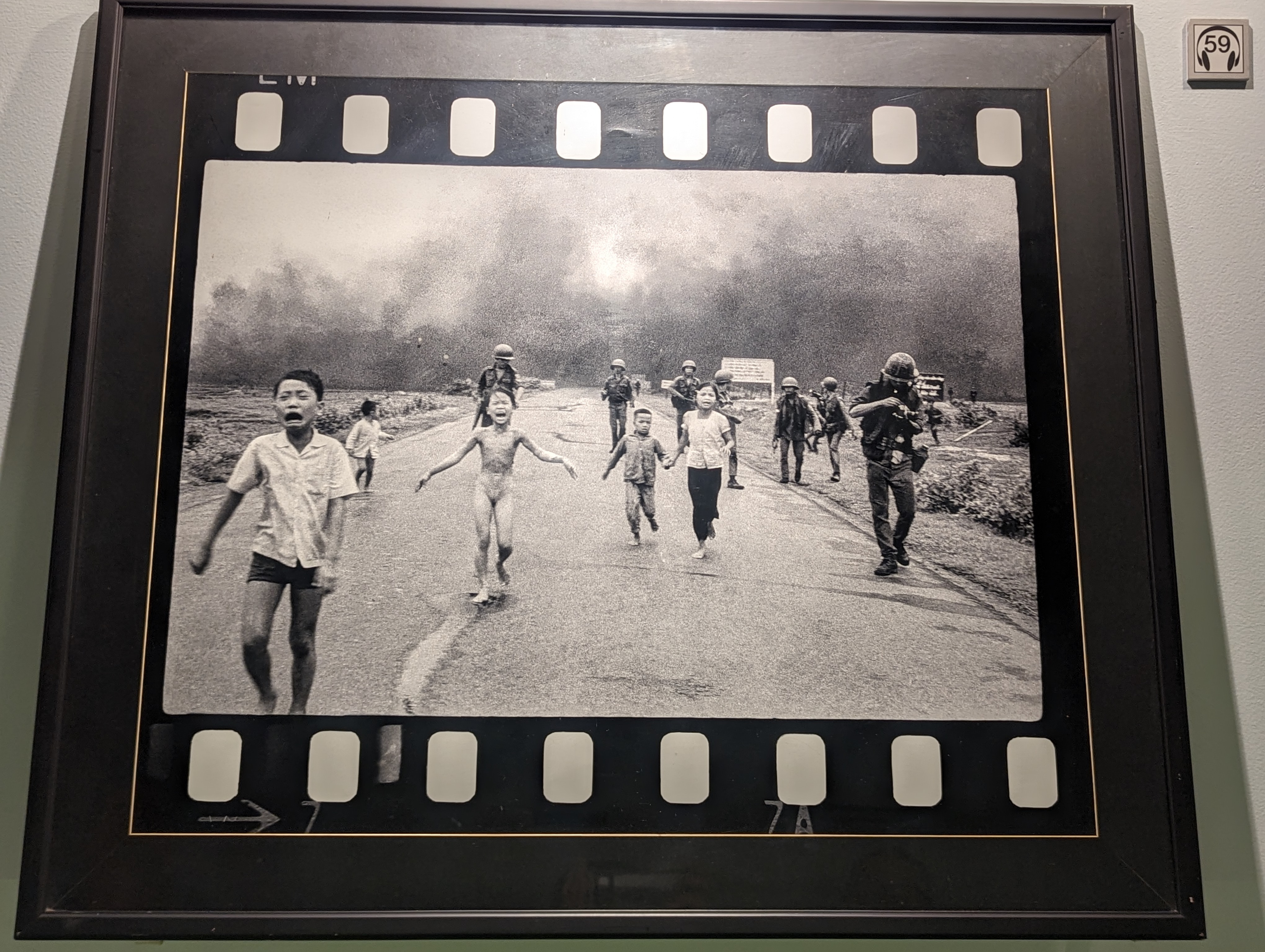

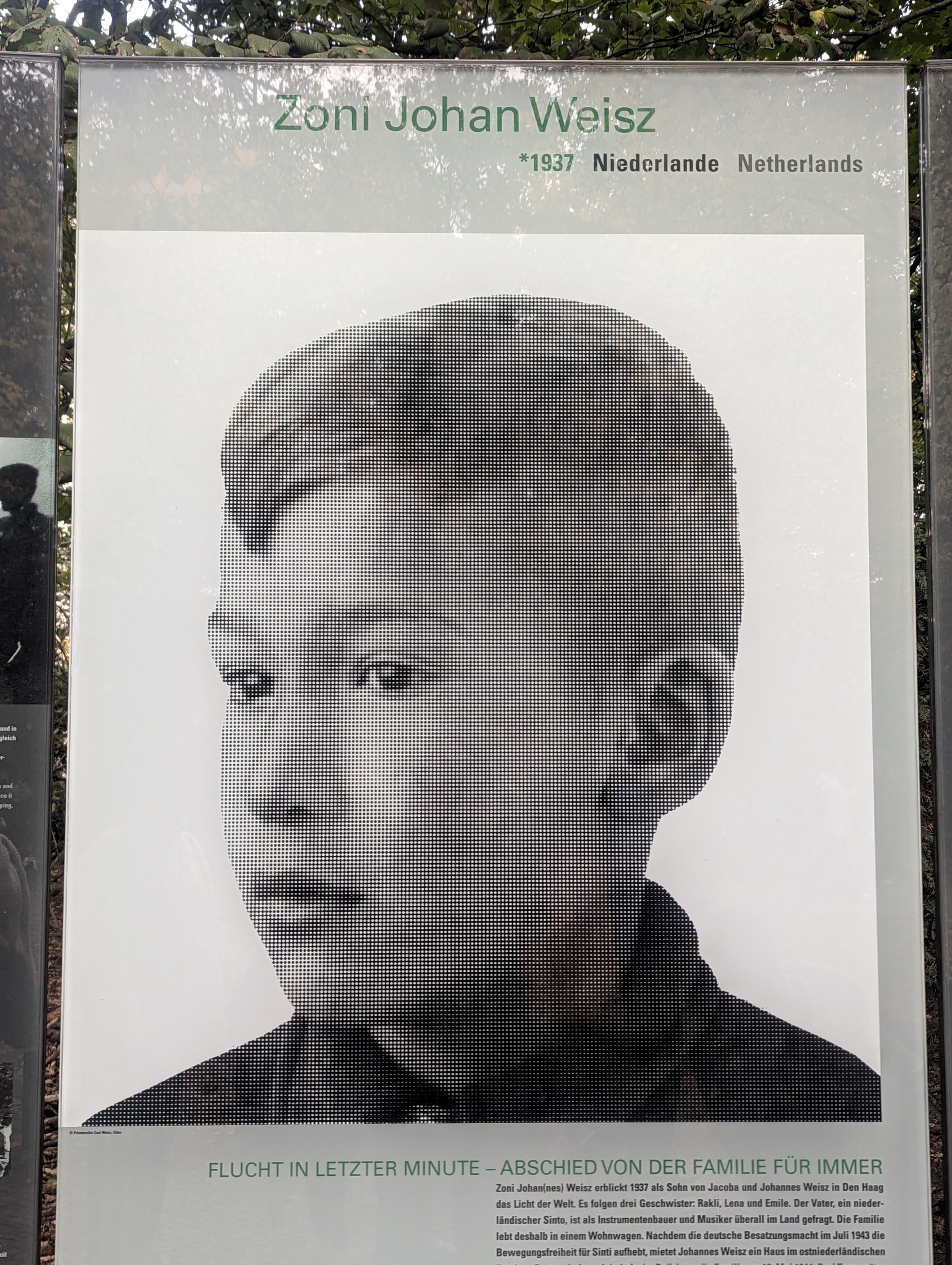

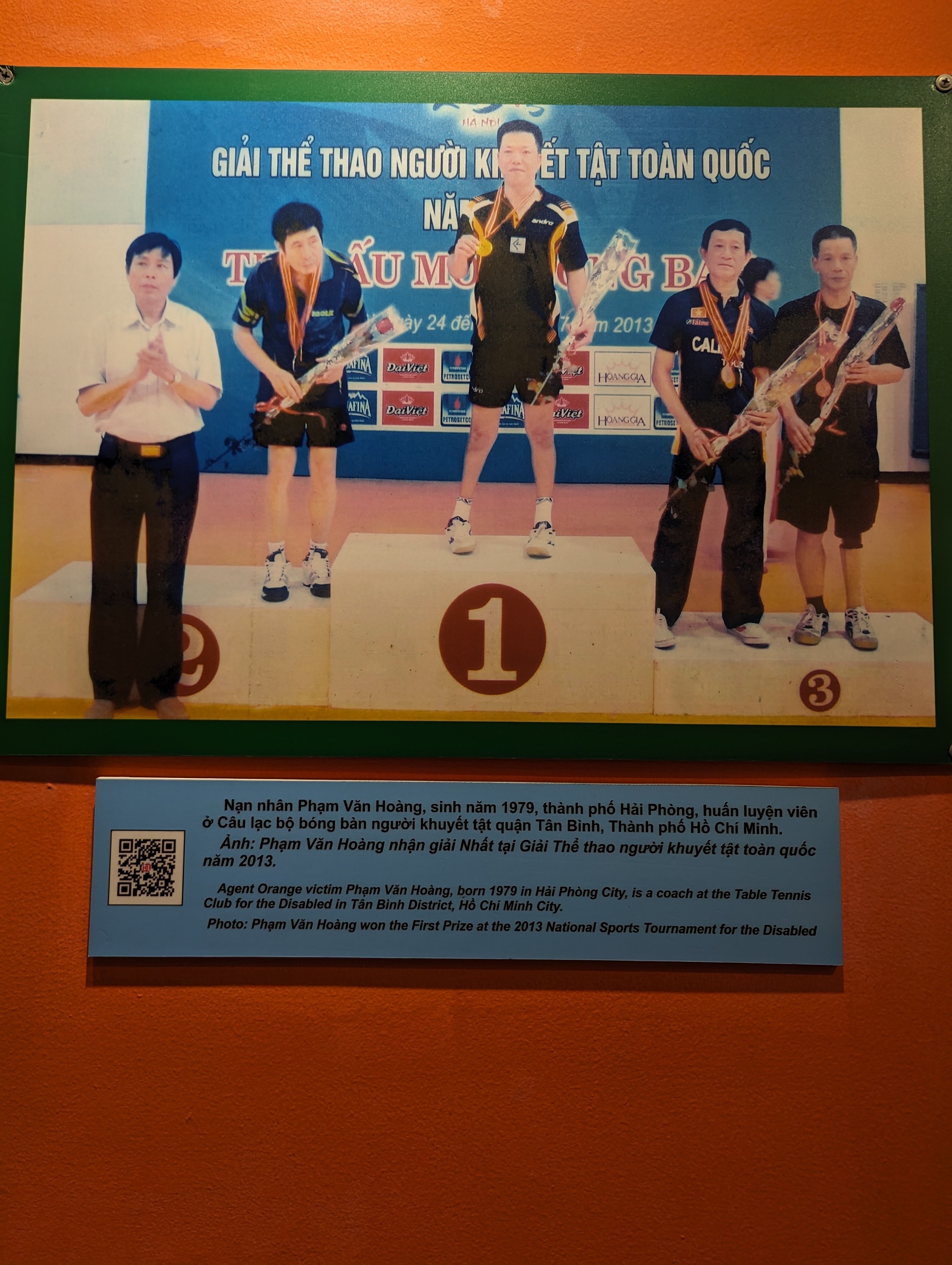

Method 3 - OpenAI CLIP as Preprocessing

From a previous project where I differentiated between pictures of art and text labels, I became familiar with OpenAI’s Contrastive Language-Image Pre-training (CLIP) model. With this, I could define some text labels (“a painting”, “a statue”, “a real human”), give an image to CLIP, and get a prediction of which labels best matched the input image. I believed this could help me as a preprocessing step, because many of the pictures that weren’t of me were actually pictures of artwork. There were paintings, photographs, and statues in museums that sat throughout the range of the distance metric, from the lower bound through the median to the upper bound: they were unavoidable if I simply adjusted the threshold by which I determined a matching picture.

I theorized that OpenAI CLIP could help me identify and separate all pictures of artwork prior to adjusting the threshold by which I defined a matching photo. I ran this model with the text labels: [ "a graffiti", "a painting", "a statue", "an artwork in a museum", "a mural", "a sculpture", "a person", "a living human being", "a picture of humans at a tourist attraction", "a person with sunglasses on"]
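One way to run this kind of zero-shot labeling is through the Hugging Face `transformers` port of CLIP, sketched below. This is an assumption on my part: the checkpoint name is one public CLIP checkpoint, and the split of the ten labels into an artwork group and a human group is my reading of the list above, so treat both as placeholders.

```python
LABELS = (
    "a graffiti", "a painting", "a statue", "an artwork in a museum",
    "a mural", "a sculpture", "a person", "a living human being",
    "a picture of humans at a tourist attraction", "a person with sunglasses on",
)
# Assumed grouping: the first six labels describe artwork, the rest people.
ART_LABELS = set(LABELS[:6])


def clip_label_scores(image_path, labels=LABELS, checkpoint="openai/clip-vit-base-patch32"):
    """Return a {label: probability} mapping for one image."""
    # Lazy imports so the decision helper below works without these packages.
    from PIL import Image
    from transformers import CLIPModel, CLIPProcessor

    # Loaded on every call for brevity; cache these when scoring many photos.
    model = CLIPModel.from_pretrained(checkpoint)
    processor = CLIPProcessor.from_pretrained(checkpoint)
    image = Image.open(image_path).convert("RGB")
    inputs = processor(text=list(labels), images=image, return_tensors="pt", padding=True)
    probs = model(**inputs).logits_per_image.softmax(dim=1)[0]
    return dict(zip(labels, probs.detach().tolist()))


def looks_like_artwork(scores):
    """True when the single best-scoring label belongs to the artwork group."""
    best_label = max(scores, key=scores.get)
    return best_label in ART_LABELS
```

Deciding on the top label alone is the simplest rule. Summing the probabilities within each group would be a reasonable alternative when several artwork labels split the score between them.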

It identified 210 photos as belonging to the artwork labels. Of those, 43 were false positives (in the sense that they had real humans in them). This was a turning point in my project, where I began to think I’d soon reach the point of diminishing returns for model improvement. The 43 false positives were photos of people (not always me) in artistic settings: in museums near artwork, in concert halls, in interactive digital art exhibits, etc. They weren’t necessarily photos I wanted to grab for my purposes, even if they did happen to be photos of me.

After this, I scanned through the 255 photos that remained. I arranged them by their distance to the training photo and picked a distance I thought best captured the rest of the photos I wanted to include as positively identified. I iterated over every file that met this distance and moved it into another new subfolder, dfv_indicated_increased_thresh. This folder holds 91 photos, of which only 9 aren’t of me. That leaves 164 photos categorized as not me by omission, of which only 48 are false negatives, and again these were generally photos where I was not looking directly at the camera, had sunglasses on, or there was some camera glare.

Conclusion & Final Thoughts

From the folders of photos I successfully categorized as being of me, I appended all their file names to a list that was saved as a .txt file. There are some false negatives that I manually added to this list. I used Android Debug Bridge and some command line magic to move all these files from my main phone gallery folder into a subfolder. Now when I’m prompted to use a photo of me for something, I’ll have roughly 750 pics to choose from without scrolling past all the pictures of pasta I’ve taken in between.
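The "command line magic" could look something like the sketch below, which writes the file list and then builds one `adb shell mv` command per file. The device paths in the usage note are hypothetical and depend on the phone. The commands are only built here, not executed.

```python
import shlex
from pathlib import Path


def save_file_list(names, list_path):
    """Write one file name per line to a text file."""
    Path(list_path).write_text("\n".join(names) + "\n", encoding="utf-8")


def adb_move_commands(list_path, gallery_dir, subfolder):
    """Build one `adb shell mv` command (as an argument list) per listed file."""
    names = Path(list_path).read_text(encoding="utf-8").splitlines()
    commands = []
    for name in filter(None, names):  # skip blank lines
        src = f"{gallery_dir}/{name}"
        dst = f"{gallery_dir}/{subfolder}/{name}"
        # Quote paths because adb hands the joined string to the device shell.
        commands.append(["adb", "shell", "mv", shlex.quote(src), shlex.quote(dst)])
    return commands
```

Each command could then be run with `subprocess.run(cmd, check=True)`, for example after `adb_move_commands("me.txt", "/sdcard/DCIM/Camera", "me")`. The target subfolder has to exist on the phone before any file is moved into it.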

This was a neat little way to solve a strange and very first world problem. If I went about this again there’s some other things I’d do:

- I’d try to apply grayscale as a preprocessing step to see if that boosted performance in any regard. It’s usually not necessary for modern detector and vector-embeddings models, but can boost performance for photos with extreme lighting conditions (of which I have a few)

- I’d use a smaller vector embedding model than VGG-Face (used in Method 1). This is a DeepFace default that I hadn’t checked, but adding more dimensions to vector embeddings can have diminishing returns. It can make the representation noisy and less suited to calculating distances - which is exactly what I used it for. Research has shown high accuracy in facial recognition can be achieved with as few as 128 dimensions.

- It might have been interesting or more efficient to organize this project as a classification problem. I could have labeled some photos of me and some that weren’t of me, and for any new photo determined which class it belongs to by which group it’s closest to - effectively a nearest-centroid or k-nearest-neighbors solution.
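The k-nearest-neighbors idea from the last bullet can be sketched in a few lines. This is a toy illustration under my own assumptions: the embeddings are 2-dimensional stand-ins for real face embeddings, and Euclidean distance with k=3 is an arbitrary choice.

```python
import math
from collections import Counter


def knn_predict(labeled, query, k=3):
    """Majority vote among the k labeled embeddings closest to the query."""
    nearest = sorted(labeled, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Toy 2-dimensional embeddings; real ones would come from a face model.
labeled = [
    ([0.0, 0.1], "me"),
    ([0.1, 0.0], "me"),
    ([0.2, 0.1], "me"),
    ([1.0, 1.0], "not me"),
    ([0.9, 1.1], "not me"),
]
print(knn_predict(labeled, [0.1, 0.1]))  # me
print(knn_predict(labeled, [1.0, 0.9]))  # not me
```

Unlike a single distance threshold, this uses labeled examples of both classes, which might help with look-alike faces as long as enough "not me" examples are labeled.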

Some thoughts on facial recognition and limitations I encountered:

- Lack of diversity in tech impacting algorithm training data has previously been reported to impact how these types of models perform with POC. I did see myself being mistaken for other Asian men across my photos.

- Sunglasses have been shown to drastically reduce the accuracy of facial verification - I wear sunglasses across a lot of my photos.

Either way, all my pics are sorted on my phone now. If you ever have this niche problem, hopefully you can take and adapt my Jupyter Notebook to your needs!